Kudan’s simultaneous localization and mapping software, GrandSLAM, features commercial-grade performance, exploiting the full potential of a large number of sensors to bring value no matter your configuration or use case.

Demo videos

Demo videos available on our YouTube channel

GrandSLAM

Simultaneous localization and mapping (SLAM) software that uses all sensors for maximum performance

- Accepts a wide range of sensor data, such as camera, ToF, Lidar, IMU, GNSS, and wheel odometry.

- A camera or Lidar can currently be used as the primary sensor, providing commercial-grade performance with high accuracy, low processing time, maximum robustness, huge map scalability, robust system stability, low integration complexity, and cross-platform portability.

- Unlocks the full potential of your system with significant performance gains over alternative approaches.

Sensor configuration freedom and incomparable performance on your hardware

- GrandSLAM is our most flexible system to date, giving you complete freedom in sensor requirements and configuration, with no need for expensive or complex sensors.

- Kudan has resolved one of the most critical multi-sensor system pain points, with technology that enables synchronization and calibration between all sensors.

Visual SLAM

Commercial-grade, with faster processing, lower memory use, higher accuracy, and greater robustness

- Kudan’s proprietary visual SLAM software has been extensively developed and tested for use in commercial settings.

- Open source and other commercial algorithms struggle in many common use cases and scenarios.

- KudanSLAM achieves much shorter processing time, higher accuracy, and greater robustness in dynamic situations.

Reference datasets are available here (Dataset 1 – a visual sequence targeting indoor robots, Dataset 2 – a visual sequence targeting AR/VR)

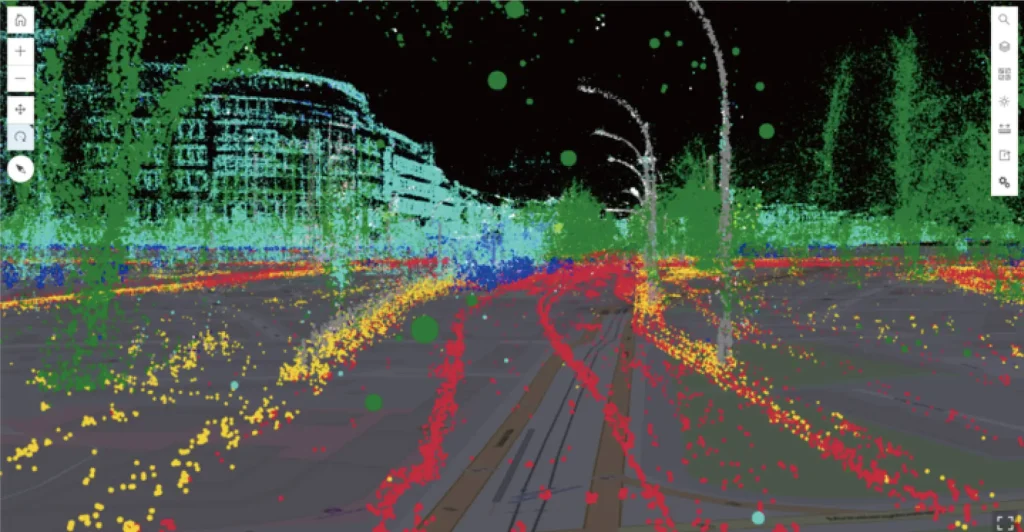

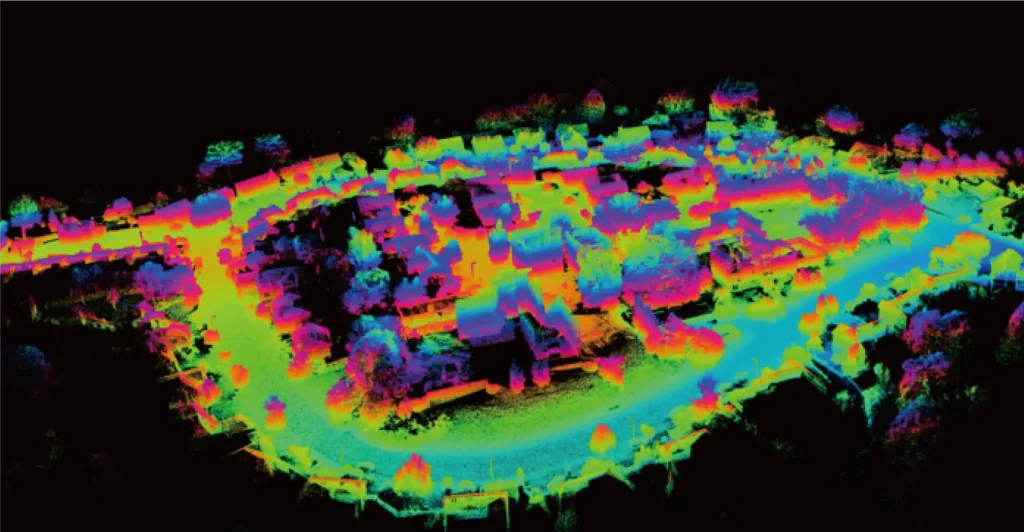

Lidar SLAM

Overcome typical Lidar localization and mapping problems with higher accuracy, reduced map size, and lower latency

- Leverages our deep expertise in visual SLAM to reach groundbreaking performance, including 1 cm-level accuracy and maps more than 300x smaller than the raw Lidar data.

- Almost zero pose estimation latency when combined with an IMU.

- Compatible with many different types of Lidar technology, such as spinning, solid-state, and prism-based.

- Corrects motion blur in the Lidar data stream while mapping, resulting in sharper point clouds and improved downstream AI processing such as object recognition.

- Reference dataset is available here (Dataset – a dataset targeting autonomous driving and outdoor robotics: coming soon)

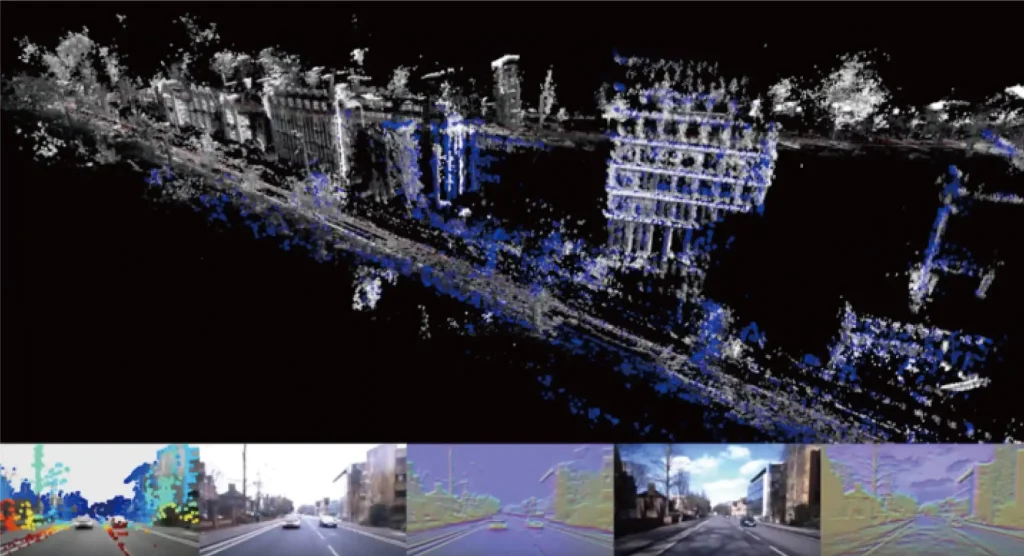
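
To make the motion-blur correction above more concrete, here is a minimal 2D sketch of one common deskewing approach: each Lidar point carries its own timestamp, the sensor pose is interpolated across the sweep, and every point is re-expressed in the sensor frame at the start of the sweep. This is not Kudan's implementation, which this page does not describe. The function name `deskew_sweep`, the data layout, and the constant-velocity assumption (no yaw wraparound within a sweep) are all illustrative assumptions.

```python
import math


def deskew_sweep(points, t_start, t_end, pose_start, pose_end):
    """Re-express every point in the sensor frame at t_start.

    points: list of (x, y, t), each measured in the sensor frame at time t.
    pose_start, pose_end: (x, y, yaw) of the sensor in the world frame at
    t_start and t_end. Motion in between is assumed to be constant velocity.
    """
    x0, y0, yaw0 = pose_start
    x1, y1, yaw1 = pose_end
    corrected = []
    for px, py, t in points:
        # Fraction of the sweep elapsed when this point was captured.
        a = (t - t_start) / (t_end - t_start)
        # Linearly interpolated sensor pose at time t (assumption).
        sx = x0 + a * (x1 - x0)
        sy = y0 + a * (y1 - y0)
        syaw = yaw0 + a * (yaw1 - yaw0)
        # Point in the world frame.
        wx = sx + math.cos(syaw) * px - math.sin(syaw) * py
        wy = sy + math.sin(syaw) * px + math.cos(syaw) * py
        # Back into the sensor frame at t_start (inverse of the start pose).
        dx, dy = wx - x0, wy - y0
        cx = math.cos(yaw0) * dx + math.sin(yaw0) * dy
        cy = -math.sin(yaw0) * dx + math.cos(yaw0) * dy
        corrected.append((cx, cy))
    return corrected


# A static object at world (10, 0) seen three times while the sensor
# drives 1 m forward during the sweep: the raw points are smeared.
raw = [(10.0, 0.0, 0.0), (9.5, 0.0, 0.5), (9.0, 0.0, 1.0)]
sharp = deskew_sweep(raw, 0.0, 1.0, (0.0, 0.0, 0.0), (1.0, 0.0, 0.0))
```

In this toy sweep the three smeared returns collapse back onto a single location, which is the "sharper point cloud" effect in miniature. In practice the in-sweep poses would come from an IMU or from the SLAM estimate itself.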

SLAM X AI

Artisense’s GN-net relocalization: AI-enabled (re)localization that is incredibly robust to scenery changes

- Overcomes the challenge of localizing and mapping a vehicle or machine when the scenery or conditions change over time.

- Uses “deep features” identified by AI for matching correct locations even when their appearance is different.

- SLAM mapping can update automatically to include any changes that are detected.

Tight coupling and time synchronization

Integrate all sensor information into one system with accurate time synchronization, rather than just “filtering” separate sensor outputs

- The common sensor fusion approach is a “loose coupling” of sensors, while Kudan and Artisense use “tight coupling”.

- The Kudan group has developed the only system that uses tight coupling of all sensors, including camera, Lidar, GNSS, and IMU.

- Time synchronization problems are solved with microsecond-level synchronization between all sensors.

- Unparalleled robustness and accuracy for a wide range of use cases.
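
As a toy illustration of the tight-coupling idea, the sketch below feeds raw, timestamped measurements from two hypothetical sensors into a single weighted least-squares estimate, instead of fusing poses that each sensor computed on its own. It is a deliberately simplified 1D constant-velocity model, not the Kudan or Artisense estimator; the function name `fuse_tightly`, the sensor roles, and the weights are assumptions made for the example.

```python
def fuse_tightly(position_fixes, velocity_readings, w_pos=1.0, w_vel=1.0):
    """Jointly estimate (p0, v) for the 1D model p(t) = p0 + v * t.

    position_fixes: list of (t, p) raw samples, e.g. from a GNSS-like sensor.
    velocity_readings: list of (t, v) raw samples, e.g. from wheel odometry.
    Both lists must share one clock, which is where time sync matters.
    """
    # Accumulate the 2x2 normal equations for the unknowns (p0, v).
    a11 = a12 = a22 = b1 = b2 = 0.0
    for t, p in position_fixes:        # measurement row [1, t]
        a11 += w_pos
        a12 += w_pos * t
        a22 += w_pos * t * t
        b1 += w_pos * p
        b2 += w_pos * t * p
    for _t, v in velocity_readings:    # measurement row [0, 1]
        # The timestamp is unused here only because v is modelled as constant.
        a22 += w_vel
        b2 += w_vel * v
    det = a11 * a22 - a12 * a12
    if det == 0.0:
        raise ValueError("measurements do not constrain the state")
    # Closed-form solution of the symmetric 2x2 system.
    p0 = (a22 * b1 - a12 * b2) / det
    v = (a11 * b2 - a12 * b1) / det
    return p0, v


# One position fix and one velocity reading: neither sensor alone can
# recover both p0 and v, but the joint estimate can.
p0, v = fuse_tightly([(2.0, 8.0)], [(0.0, 3.0)])
```

The point of the example is that the single position fix cannot determine velocity and the velocity reading cannot determine the starting position, yet one joint solve over both raw measurements recovers the full state. A loosely coupled design would need each sensor to produce a complete estimate first.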