Previous articles:

Camera Calibration for SLAM (1/3)

Camera Calibration for SLAM (2/3)

Calibrating stereo extrinsics

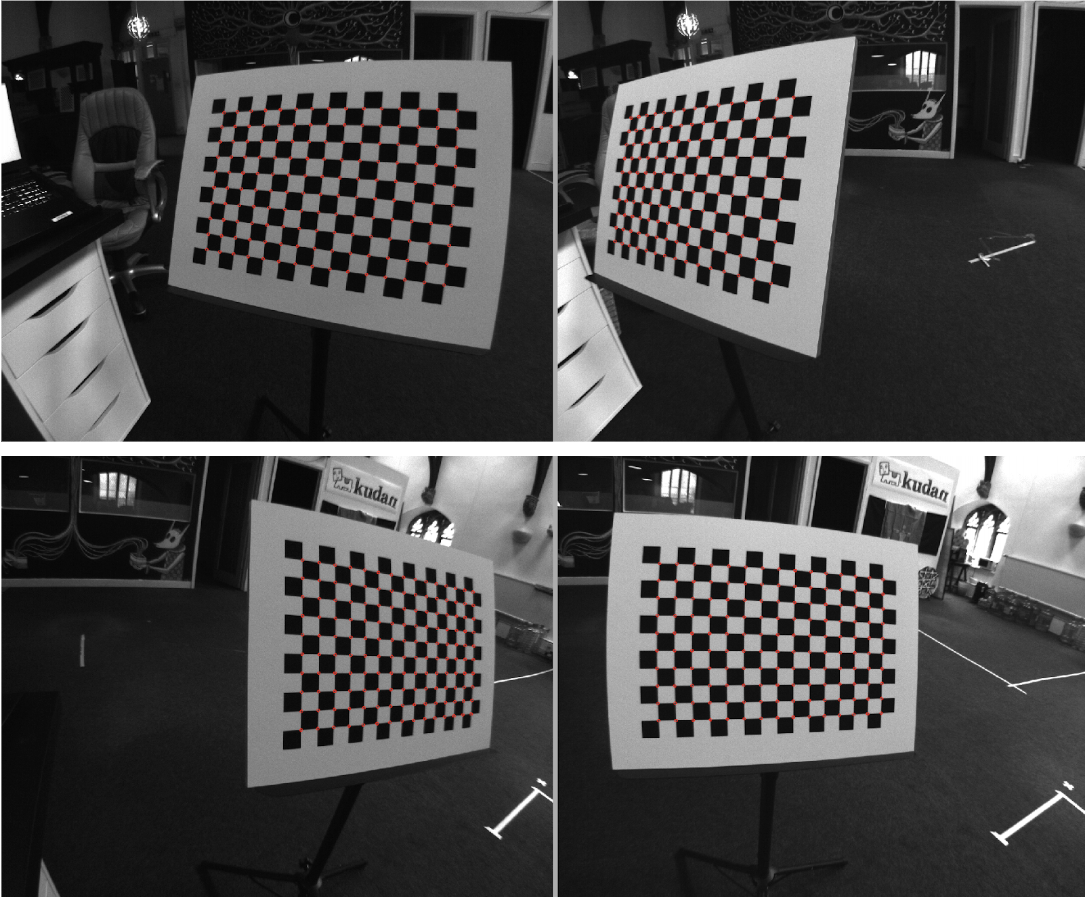

Once the intrinsics are calibrated, the stereo case also requires calibrating the extrinsics. For this it is important to use image pairs captured at exactly the same time, in which the internal chessboard corners can be reliably detected.

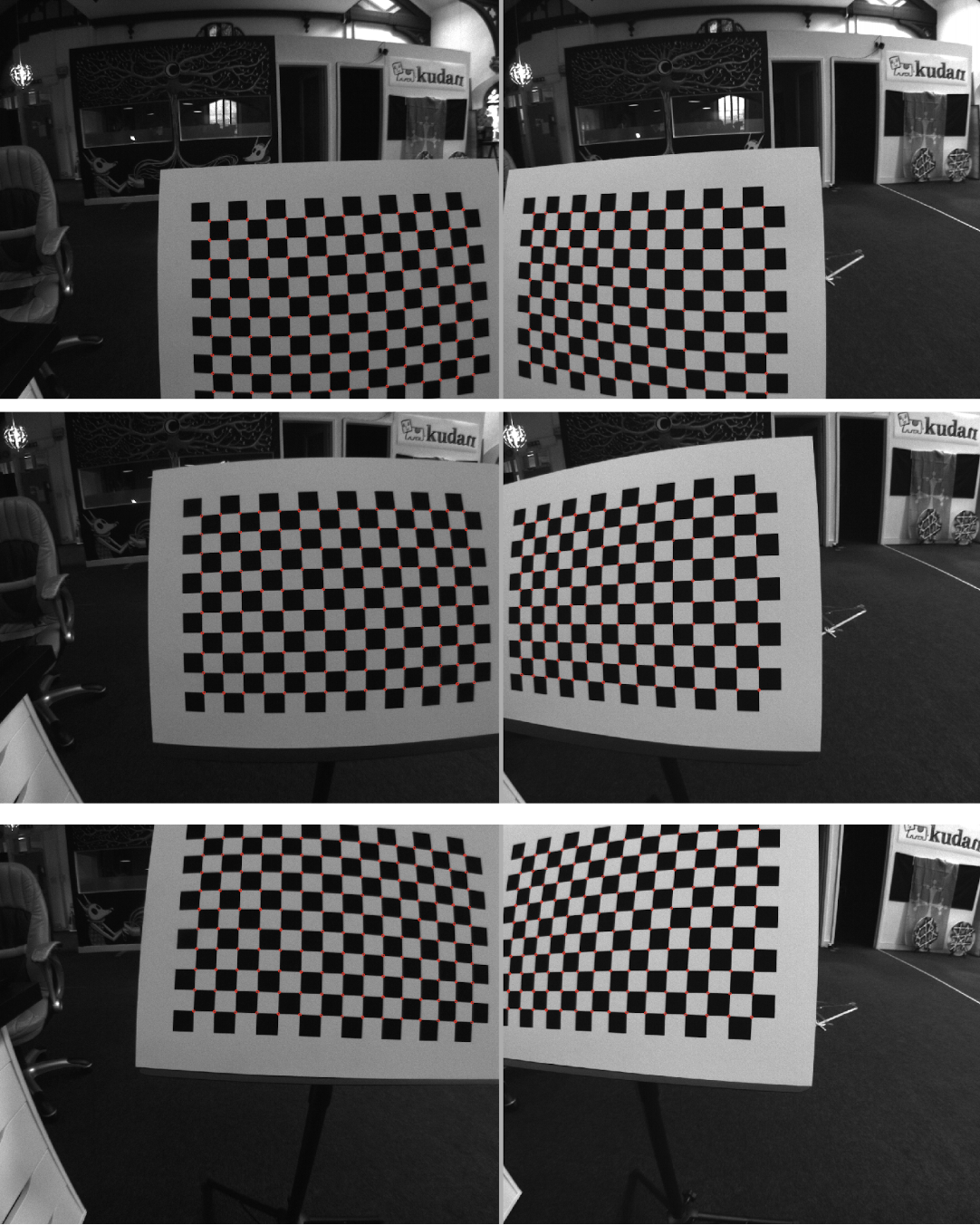

Coverage and diversity of views are important, as in the intrinsics case, but for extrinsics we usually cannot cover the left part of the left image together with the right part of the right image, because of the disparity between the two views.

We recommend keeping the chessboard as close to the cameras as possible while it remains visible from both views, and covering different vertical offsets in this manner:

Once these basic views are covered, we can try to increase accuracy by introducing some slant from the sides:

Take care to avoid extreme slant angles, because they can negatively impact the detection of the internal chessboard corners.

As in the intrinsics case, more diverse views increase accuracy at the cost of computation time and a higher chance of corner mis-detection. For the same reasons, we should check that the corner detection is correct and avoid repeating the same view in more than one image pair.
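One possible way to avoid repeated views is to compare the detected corners of each new pair with those of the pairs already accepted. The sketch below is only an illustration: the function names are hypothetical, each view is assumed to be a list of (x, y) corner positions from the left image in a consistent order, and the 20-pixel threshold is an arbitrary assumption to tune per setup.

```python
import math


def mean_corner_shift(corners_a, corners_b):
    """Mean Euclidean distance (pixels) between corresponding corners of two views."""
    if len(corners_a) != len(corners_b) or not corners_a:
        raise ValueError("corner lists must be non-empty and of equal length")
    total = sum(
        math.hypot(xa - xb, ya - yb)
        for (xa, ya), (xb, yb) in zip(corners_a, corners_b)
    )
    return total / len(corners_a)


def drop_repeated_views(views, min_shift_px=20.0):
    """Keep a view only if it differs enough from every view already kept.

    min_shift_px is an assumed threshold, not a recommended value.
    """
    kept = []
    for corners in views:
        if all(mean_corner_shift(corners, k) >= min_shift_px for k in kept):
            kept.append(corners)
    return kept
```

A view whose corners moved by only a pixel or two relative to an accepted one would be discarded, while a clearly different board position would be kept.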

Extrinsic RMS errors should ideally be minimised, as in the intrinsics case. In the extrinsic case they denote the average vertical offset of a feature point between the left and right cameras once undistortion and rectification are applied. It’s important to note that real-time stereo algorithms such as SLAM rely quite heavily on this vertical offset being no more than a fraction of a pixel, because otherwise the computation of map point depths is compromised. In practice, an extrinsics RMS error close to one pixel or more is a sign that something went wrong in the calibration.
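To make the vertical-offset interpretation concrete, here is a minimal sketch that computes the RMS of the vertical differences between matched points in a rectified pair. It assumes the matches are already available as two lists of (x, y) pixel coordinates in the same order; the function name is hypothetical, and a given toolbox may compute its reported RMS differently.

```python
import math


def vertical_offset_rms(left_points, right_points):
    """RMS of (y_left - y_right) over matched points in rectified images."""
    if len(left_points) != len(right_points) or not left_points:
        raise ValueError("point lists must be non-empty and of equal length")
    squared = sum(
        (y_left - y_right) ** 2
        for (_, y_left), (_, y_right) in zip(left_points, right_points)
    )
    return math.sqrt(squared / len(left_points))


# Two matched features with vertical offsets of -0.3 and 0.4 pixels.
left = [(100.0, 50.0), (200.0, 80.0)]
right = [(90.0, 50.3), (185.0, 79.6)]
rms = vertical_offset_rms(left, right)
```

Only the y coordinates enter the result; the horizontal difference is the disparity that the stereo algorithm uses for depth.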

Understanding the parameters

Once the calibration process is finished, we have the following parameters:

Intrinsic parameters:

●Focal length: fx, fy

●Principal point: cx, cy

●Radial distortion: k1, k2, k3, k4, k5, k6

●Tangential distortion: p1, p2

In the case of monocular calibration the above is all we have. In the case of stereo we will have a set of intrinsic parameters for each camera plus a set of extrinsic parameters:

●Translation vector (3x1) from right camera to left camera

●Rotation matrix (3x3) to rectify cameras.

Focal length, expressed in pixels, is proportional to the width of the images and, for a given width, decreases as the field of view widens. Vertical and horizontal values should be similar, and values for the left and right cameras should be similar as well.
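As a rough plausibility check, the expected focal length in pixels can be estimated from the nominal horizontal field of view of the lens. The sketch below assumes an ideal pinhole camera without distortion, so for wide-angle lenses it gives only a ballpark figure; the function name is hypothetical.

```python
import math


def expected_focal_px(image_width_px, horizontal_fov_deg):
    """Pinhole estimate: fx = (width / 2) / tan(horizontal FOV / 2)."""
    if not 0.0 < horizontal_fov_deg < 180.0:
        raise ValueError("field of view must be between 0 and 180 degrees")
    half_fov = math.radians(horizontal_fov_deg) / 2.0
    return (image_width_px / 2.0) / math.tan(half_fov)


# A 640-pixel-wide image with a 90-degree horizontal field of view.
fx_estimate = expected_focal_px(640, 90.0)
```

A calibrated fx that is far from such an estimate suggests checking the input images or the detected corners.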

The principal point should be approximately at the center of the image.

Unfortunately, it's basically impossible to judge distortion parameters just by looking at them, because they are factors and ratios in quite complicated formulas (see link for more details). For example, the absolute values of the radial distortion coefficients may differ between the left and right cameras, yet if the ratio between the first and fourth parameters is roughly the same for both cameras, and likewise for the second and fifth, the two sets should yield similar overall undistortion mappings.
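Rather than comparing coefficients one by one, it can help to evaluate the radial scaling they produce. The sketch below assumes the rational radial model used by OpenCV, which matches the six radial coefficients listed earlier; other toolboxes may use a different formula. The radius r is in normalized image coordinates, the function names are hypothetical, and tangential distortion is ignored.

```python
def radial_factor(r, k):
    """Radial scaling (1 + k1 r^2 + k2 r^4 + k3 r^6) / (1 + k4 r^2 + k5 r^4 + k6 r^6)."""
    k1, k2, k3, k4, k5, k6 = k
    r2 = r * r
    numerator = 1.0 + k1 * r2 + k2 * r2 ** 2 + k3 * r2 ** 3
    denominator = 1.0 + k4 * r2 + k5 * r2 ** 2 + k6 * r2 ** 3
    return numerator / denominator


def max_radial_difference(k_a, k_b, r_max=1.0, steps=50):
    """Largest absolute difference in radial scaling for radii in [0, r_max]."""
    radii = [r_max * i / steps for i in range(steps + 1)]
    return max(abs(radial_factor(r, k_a) - radial_factor(r, k_b)) for r in radii)
```

Calling max_radial_difference with the left and right coefficient sets gives a single number to compare, where r_max should roughly correspond to the image corner in normalized coordinates.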

The translation vector should have a norm similar to the baseline between the left and right cameras. Its scale depends on the square size given as input to the extrinsics calibration, and it is expressed in the same units.

The rotation matrix should be close to the identity matrix in the case of properly-assembled stereo rigs.
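These two checks on the extrinsics are easy to script. The following is a minimal sketch with hypothetical function names: it computes the norm of the translation vector, to compare with the measured baseline, and the rotation angle encoded by the 3x3 matrix, which should be small when the matrix is close to the identity. The matrix is assumed to be a valid rotation given as nested lists.

```python
import math


def translation_norm(t):
    """Euclidean norm of the translation vector, in the units of the square size."""
    return math.sqrt(sum(component * component for component in t))


def rotation_angle_deg(rotation):
    """Angle of a 3x3 rotation matrix, from trace(R) = 1 + 2 cos(angle)."""
    trace = rotation[0][0] + rotation[1][1] + rotation[2][2]
    cos_angle = max(-1.0, min(1.0, (trace - 1.0) / 2.0))  # clamp rounding noise
    return math.degrees(math.acos(cos_angle))


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
angle = rotation_angle_deg(identity)
baseline = translation_norm((-0.12, 0.0, 0.0))
```

How many degrees count as "close to the identity" depends on the rig, so any threshold applied to the angle is a per-setup assumption.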

Other than these general considerations, it’s difficult to judge calibration quality by looking at its numbers. To properly verify that a calibration is correct, there are two distinct kinds of observations to make on the images after undistortion and rectification are applied:

●Straight lines in the real world should look straight in the view. If a calibration is imprecise in the distortion parameters, straight lines will curve close to the edges.

●The same visual features should appear on the same scanline in the left and right images. A poor stereo calibration will show a vertical disparity somewhere in the images between the same physical points.

It's not very difficult to visually check these conditions on the corrected images, but the checks should be repeated at different points of the images, ideally on objects at different distances from the cameras.
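The straight-line check can also be quantified. The sketch below assumes a few points have been sampled along an edge that is straight in the real world, as (x, y) pixel coordinates in the corrected image, and measures how far they stray from the line joining the first and last point. The function name is hypothetical and the acceptable deviation is left to the reader.

```python
import math


def max_deviation_from_line(points):
    """Largest perpendicular distance (pixels) from the line through the end points."""
    (x0, y0), (x1, y1) = points[0], points[-1]
    length = math.hypot(x1 - x0, y1 - y0)
    if length == 0.0:
        raise ValueError("first and last point must differ")
    return max(
        abs((x1 - x0) * (y0 - y) - (x0 - x) * (y1 - y0)) / length
        for x, y in points
    )


# An edge that bows by 2 pixels in the middle.
bowed_edge = [(0.0, 0.0), (5.0, 2.0), (10.0, 0.0)]
deviation = max_deviation_from_line(bowed_edge)
```

Edges close to the image borders are the most informative, since that is where residual distortion tends to show.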

Toolboxes for camera calibration

The above guidelines are independent of the specific toolbox used. Possible free options for a stereo calibration toolbox are:

●OpenCV, available from: https://opencv.org/

●Jean-Yves Bouguet’s Camera Calibration Toolbox for Matlab, available from: http://www.vision.caltech.edu/bouguetj/calib_doc/

●The DLR Camera Calibration Toolbox, available from: http://www.robotic.dlr.de/callab/