We have been writing extensively about Simultaneous Localization and Mapping (SLAM), from the basics to the different types of SLAM and how to select sensors such as cameras and lidar.

But one piece is still missing: with so many types of SLAM on the market, how can we evaluate SLAM performance? When we say performance, we mean the combined performance of the relevant hardware and the SLAM software.

Although dozens of different techniques exist to tackle the SLAM problem, there is no gold standard for comparing the results of different SLAM algorithms [1]. Still, there are certain aspects to be aware of when evaluating SLAM that are common across every SLAM system.

First, we’ll look at three essential steps you need to prepare for the evaluation phase. Then we’ll analyze five different metrics that you may evaluate the SLAM system on. Finally, we’ll close with four different scenarios to test to ensure the system’s robustness.

3 vital things to prepare before you evaluate

So you want to evaluate a SLAM system — pause. You need to prepare certain things before evaluating any SLAM system to ensure that the results are accurate and meaningful.

1. Pick the right locations

We often see people pick office areas or hallways to evaluate SLAM systems. While this may be convenient, you should ask yourself: will the chosen environment resemble the actual environment in which you would use the SLAM system? [2] For Visual SLAM, for example, you need to consider lighting conditions, time of day, and the magnitude of scenery changes you expect. These factors need to be designed into the test deliberately.

In addition, it’s not only about “location”; you also need to decide which robot movements you want to test. Are you interested in SLAM performance at different speeds, over long distances, or through turns?

2. Check your prerequisites for hardware

To evaluate SLAM, you need to have some processors and sensors. People tend to use an existing camera, an affordable processor, and other additional sensors such as IMU and wheel odometry in most cases.

You’ll have to consciously decide whether these are the processor and sensor specifications you can afford, even if it means compromising on some critical metrics. It’s crucial to take a step back and understand which hardware you’d use and how it affects the evaluation phase. Also, consider each sensor specification carefully, especially for a camera or 3D lidar. (See our past articles on how to choose sensors, linked at the end of this article.)

If you have some flexibility in hardware choices, we usually recommend starting with higher-spec hardware, as it’s much easier to estimate performance on lower-spec hardware based on a good result with the higher-spec one.

3. Have a reliable “Ground truth”

Ground truth refers to the data you can use as a reference to compare SLAM performance with.

To measure the error of SLAM, you need a benchmark that is far more accurate than SLAM itself. You can use a laser tracker, motion capture, or an INS (Inertial Navigation System) as the ground truth. Each system has pros and cons. For example, motion capture can only cover a limited area (such as 10m x 10m). Laser trackers require line-of-sight to keep tracking. INS systems require RTK-GNSS, which is not available indoors.

A typical pitfall we have seen is inaccurate time synchronization between the ground truth and the other sensors. There are ways to correct it afterwards, but they are tedious and quite manual, and poor synchronization makes the whole evaluation tricky and unreliable. So it’s also a must to ensure that the recorded data is reliable.
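As a minimal sketch of what checking synchronization can look like, the snippet below pairs each SLAM pose timestamp with the nearest ground-truth timestamp and drops pairs that are too far apart in time. The function name `associate`, the `max_dt` threshold, and the constant `offset` between the two clocks are assumptions for illustration, not part of any particular SLAM toolchain.

```python
from bisect import bisect_left


def associate(gt_stamps, est_stamps, max_dt=0.02, offset=0.0):
    """Pair each estimate timestamp with the nearest ground-truth timestamp.

    gt_stamps must be sorted in ascending order (seconds).
    offset is a hypothetical constant clock offset added to the estimate stamps.
    Returns a list of (est_index, gt_index) for pairs within max_dt seconds.
    """
    pairs = []
    for i, stamp in enumerate(est_stamps):
        t = stamp + offset
        j = bisect_left(gt_stamps, t)
        # The nearest ground-truth stamp is either just before or just after t.
        candidates = [k for k in (j - 1, j) if 0 <= k < len(gt_stamps)]
        if not candidates:
            continue
        best = min(candidates, key=lambda k: abs(gt_stamps[k] - t))
        if abs(gt_stamps[best] - t) <= max_dt:
            pairs.append((i, best))
    return pairs
```

If a large share of the estimates ends up unmatched, or the matched time differences drift over the recording, that is a hint that the clocks were not synchronized well enough for a trustworthy evaluation.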

5 metrics to evaluate SLAM performance

We know that every project is unique; however, you could consider these five metrics to evaluate most SLAM systems.

1. Absolute accuracy

How accurately does the pose output reflect the true position of the robot?

Absolute accuracy focuses on the completeness of the map and alignment with the real world [3]. It’s essential, especially when you want to overlay another separately created existing map (e.g., 2D CAD floor plan) with a SLAM map. If absolute accuracy is insufficient, the overlay is likely to be inaccurate and have adverse effects.

When overlays are inaccurate, it’s challenging to set no-entry zones for robots.
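One common way to put a number on this is the root-mean-square position error between the SLAM trajectory and the ground truth, often called absolute trajectory error. The sketch below assumes the two trajectories are already time-matched arrays of positions; the function names are illustrative. The optional rigid alignment (a standard SVD-based least-squares fit) removes the difference between coordinate frames. If your SLAM map is supposed to be registered to an existing floor plan already, you may prefer `align=False` so that frame misalignment counts as error.

```python
import numpy as np


def align_rigid(est, gt):
    """Least-squares rotation R and translation t so that R @ est_i + t ~ gt_i."""
    mu_e, mu_g = est.mean(axis=0), gt.mean(axis=0)
    H = (est - mu_e).T @ (gt - mu_g)
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so that R is a rotation, not a reflection.
    d = -1.0 if np.linalg.det(Vt.T @ U.T) < 0 else 1.0
    D = np.diag([1.0] * (est.shape[1] - 1) + [d])
    R = Vt.T @ D @ U.T
    t = mu_g - R @ mu_e
    return R, t


def ate_rmse(est, gt, align=True):
    """RMS position error between time-matched (N, 2) or (N, 3) trajectories."""
    est = np.asarray(est, dtype=float)
    gt = np.asarray(gt, dtype=float)
    if align:
        R, t = align_rigid(est, gt)
        est = est @ R.T + t
    err = np.linalg.norm(est - gt, axis=1)
    return float(np.sqrt(np.mean(err ** 2)))
```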

2. Relative accuracy/ Repeatability

How accurately can the robot arrive at the same position repeatedly?

Why is this vital? Through a few examples of autonomous mobile robot (AMR) use cases, let us explain.

Consider a use case of robots docking to a charging station, or loading or unloading a pallet. These scenarios tolerate a relative error of only about 1cm at most. If we have instructed a robot to arrive at point A and it only manages to come close to point A, the robot will not be able to pick up a pallet reliably, and the use case fails.
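To separate the two notions, you can send the robot to the same goal several times and record where it actually stops according to the ground truth. The sketch below is one possible way to summarize such a test; the function name and the sample numbers are made up for illustration. The distance from the goal to the average stopping point reflects absolute accuracy, while the scatter around that average reflects repeatability.

```python
import numpy as np


def accuracy_and_repeatability(arrivals, goal):
    """Summarize repeated arrivals (ground-truth x, y in meters) at one goal."""
    arrivals = np.asarray(arrivals, dtype=float)
    goal = np.asarray(goal, dtype=float)
    centroid = arrivals.mean(axis=0)
    # Scatter of each arrival around the average stopping point.
    spread = np.linalg.norm(arrivals - centroid, axis=1)
    return {
        "absolute_offset_m": float(np.linalg.norm(centroid - goal)),
        "repeatability_rms_m": float(np.sqrt(np.mean(spread ** 2))),
        "repeatability_max_m": float(spread.max()),
    }


# Hypothetical measurements: tightly clustered, but about 5cm away from the goal.
arrivals = [[2.052, 1.001], [2.048, 0.998], [2.050, 1.003], [2.049, 0.999]]
result = accuracy_and_repeatability(arrivals, goal=[2.0, 1.0])
```

In this made-up data set the scatter stays well under 1cm even though the cluster sits roughly 5cm from the goal, which is the "repeatable but not absolutely accurate" case.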

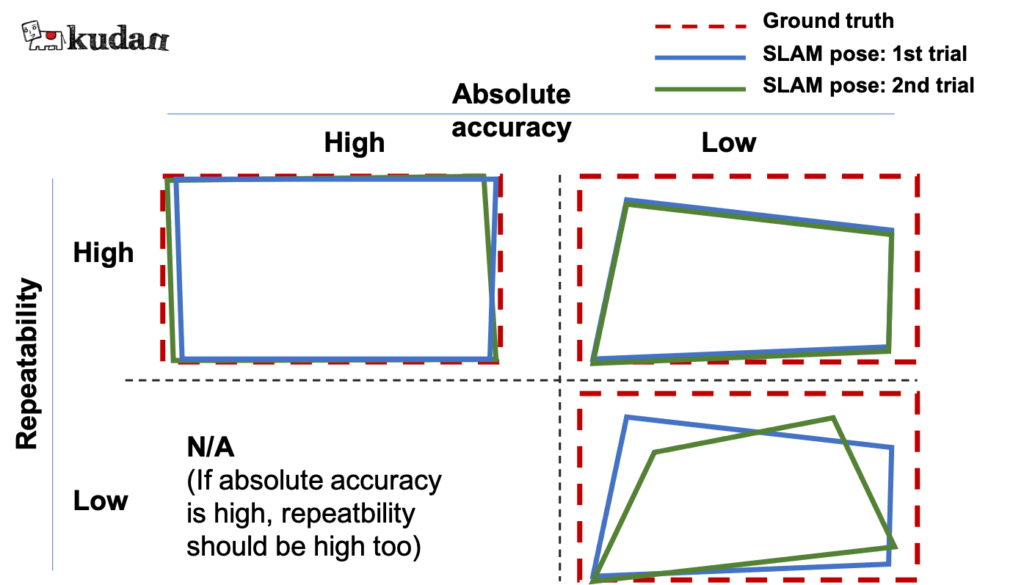

Figure 2: Absolute accuracy and repeatability

While absolute accuracy is crucial, repeatability is usually given priority for improvement, because poor repeatability directly causes failures such as missed docking or pallet pickup.

3. Relocalization capability (especially for forklifts)

How accurately can the robot recognize its position on the map?

Generally, AMRs start from specific places, so they can easily recognize their location as soon as they start operation. However, there are a few applications where the vehicle is driven manually while the system is switched off.

The robot then has no option but to recognize its precise position from an unknown location, which is common in autonomous forklift applications.

This problem is called the “kidnapped robot problem”. How can we do this evaluation?

- Create a map with a SLAM system.

- Move a robot without turning on the localization system to a few places where you know the exact location.

- Turn on the localization system and see if the robot can recognize the place and how accurate it is.
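The results of the steps above can be summarized with a small script. This is only a sketch: the pose format `(x, y, yaw)`, the function name, and the tolerances are assumptions you would replace with your own requirements. A trial whose estimate is `None` stands for a case where the system never reported a pose.

```python
import math


def relocalization_report(trials, pos_tol_m=0.10, yaw_tol_rad=math.radians(5.0)):
    """trials: list of (known_pose, estimated_pose or None), pose = (x, y, yaw)."""
    successes = 0
    position_errors = []
    for known, estimated in trials:
        if estimated is None:  # the system never reported a pose
            continue
        pos_err = math.hypot(estimated[0] - known[0], estimated[1] - known[1])
        # Wrap the heading difference into [-pi, pi) before taking its magnitude.
        yaw_diff = (estimated[2] - known[2] + math.pi) % (2 * math.pi) - math.pi
        position_errors.append(pos_err)
        if pos_err <= pos_tol_m and abs(yaw_diff) <= yaw_tol_rad:
            successes += 1
    return {
        "success_rate": successes / len(trials),
        "position_errors_m": position_errors,
    }
```

Reporting the success rate and the error of the successful cases separately is a deliberate choice here: a system that relocalizes rarely but precisely behaves very differently in operation from one that always answers but is often wrong.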

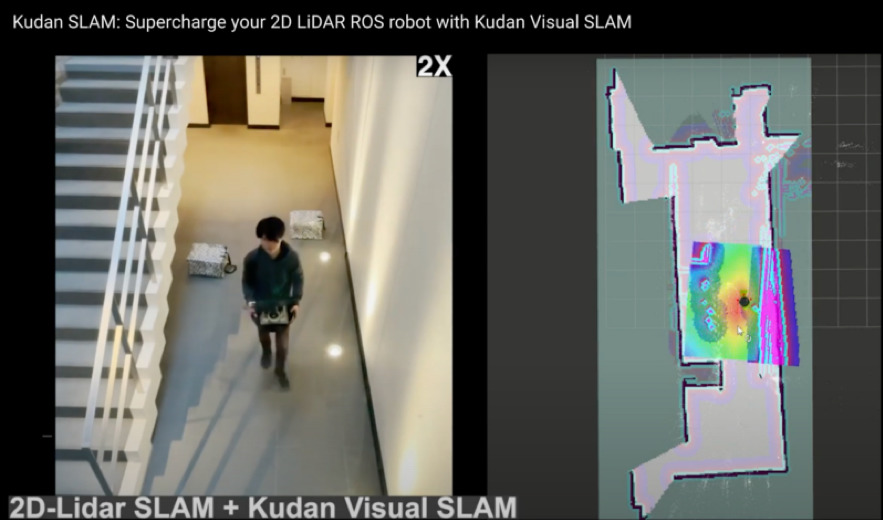

This demo video compares 2D-Lidar SLAM and 2D-Lidar+Kudan visual SLAM on this specific problem.

Figure 3: A robot is being kidnapped by a person to test relocalization performance

4. Scalability

How can you use the SLAM software at your expected scale?

A SLAM system that works best for one use case and scale may not be the best for another use case at a different scale.

Consider the best SLAM for consumer cleaning robots; it isn’t likely the best for industrial intralogistics AMRs. For consumer robots, SLAM needs to be optimized for tighter processing requirements, while the SLAM for industrial AMRs needs to have robustness in large open spaces.

If you test SLAM software in a smaller space — think about performance and usability beyond it.

Questions to ponder are: How does the map size grow as the mapped area gets larger? How easily can we share a map created by one vehicle with vehicles of the same model, or even with different models?

5. Processor usage/ Memory usage

Does your system work well within the available processing and memory resources?

It may be essential to have a lighter processing and memory usage — but this is secondary to the system’s robustness and accuracy. Once the accuracy metrics are met, you may prefer a system that is light on memory and processor usage.
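A simple way to check this is to log CPU and memory samples while the SLAM system runs through a representative route, then compare them against your budget. The sketch below assumes you already have such samples from whatever monitoring tool your platform provides; the function name, field names, and budget values are hypothetical.

```python
import numpy as np


def resource_summary(cpu_percent, mem_mb, cpu_budget_percent, mem_budget_mb):
    """Summarize logged CPU (%) and memory (MB) samples against a budget."""
    cpu = np.asarray(cpu_percent, dtype=float)
    mem = np.asarray(mem_mb, dtype=float)
    cpu_p95 = float(np.percentile(cpu, 95))
    mem_peak = float(mem.max())
    return {
        "cpu_mean": float(cpu.mean()),
        "cpu_p95": cpu_p95,
        "mem_peak_mb": mem_peak,
        # Growth between the first and last sample, e.g. as the map gets larger.
        "mem_growth_mb": float(mem[-1] - mem[0]),
        "within_budget": cpu_p95 <= cpu_budget_percent and mem_peak <= mem_budget_mb,
    }
```

Looking at the 95th percentile rather than only the mean helps catch short bursts of load, and the memory growth figure gives a first hint of how usage scales with map size.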

4 scenarios for ensuring the robustness of SLAM

One of the key advantages we expect from SLAM is its robustness — maintaining sufficient accuracy in multiple scenarios, including challenging ones.

To ensure the SLAM system is robust enough, you may choose to test 4 typical scenarios.

1. Static

The selected location should be the most representative scenery for your application. This scenery can be used for creating a base map for localization in other scenarios.

Through this scenario, you will understand the SLAM’s ideal performance in straightforward situations.

2. Dynamic objects in a scene

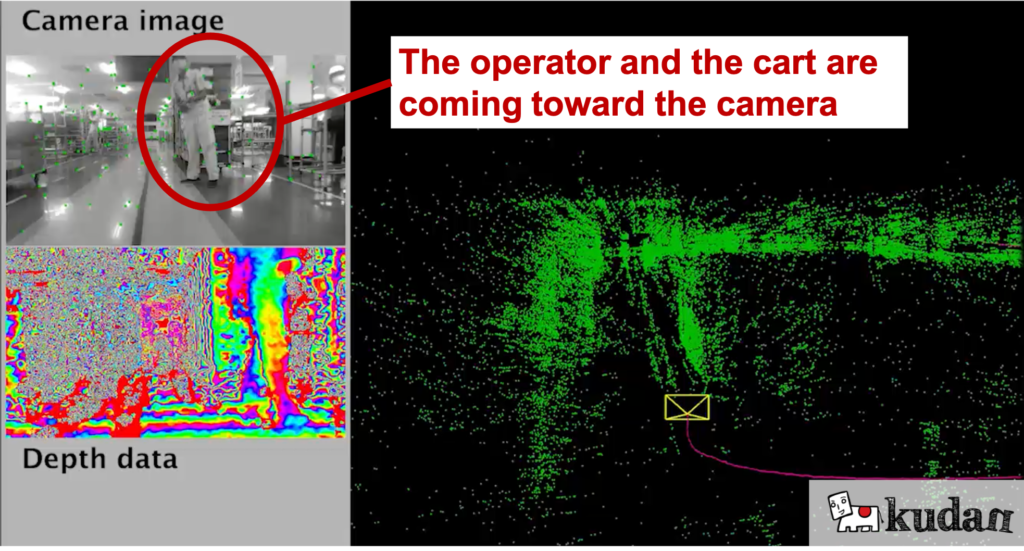

Having some dynamic objects in the field of view of the camera or lidar is one of the challenging yet common scenarios.

SLAM assumes that objects in the field of view are static and calculates the movement of the sensor relative to them (some advanced SLAM systems can handle dynamic objects); therefore, dynamic objects confuse SLAM into generating inaccurate poses. In principle, if at any point more than 50% of the sensed environment is dynamic, the system will follow the dynamic part, ruining the map and/or getting lost.

Figure 4: Example of dynamic objects in a factory scene

This scenario allows you to pressure-test how far SLAM can maintain localization with dynamic objects in the field of view.
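The 50% rule of thumb can be illustrated with a toy example. Suppose every tracked feature votes for an apparent shift of the scene, and the estimator takes the median vote. This is a deliberate simplification: real SLAM systems use more elaborate robust estimation, and the function name and numbers below are made up. The point is only that an estimator that trusts the majority follows whichever group of features is larger.

```python
import numpy as np


def estimated_shift(dynamic_fraction, n=100, true_shift=1.0, dynamic_shift=3.0):
    """Median apparent shift when a fraction of the features are on moving objects."""
    n_dyn = int(round(dynamic_fraction * n))
    shifts = np.concatenate([
        np.full(n - n_dyn, true_shift),   # features on static structure
        np.full(n_dyn, dynamic_shift),    # features on moving objects
    ])
    return float(np.median(shifts))


print(estimated_shift(0.4))  # static features are the majority
print(estimated_shift(0.6))  # dynamic features are the majority
```

With 40% dynamic features the median still reports the true shift of 1.0, while with 60% it reports 3.0, the motion of the dynamic objects.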

3. Scenery changes (pallet moved, lighting changes)

Scenery changes are challenging for SLAM systems. An example would be a scene that sometimes contains pallets and sometimes does not, while the robot is still required to track its position. In principle, if at any moment more than 50% of the sensed area is "different" from the map, things are going to go wrong. (Kudan SLAM has a way to perform well in these scenarios, though.)
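When you design this test, it helps to quantify how much the scene actually changed between mapping and localization. One possible measure, assuming you can export both the base map and the current view as boolean occupancy grids of the same shape, is the share of occupied cells that differ. The function name and grid format are assumptions for illustration.

```python
import numpy as np


def changed_fraction(map_grid, live_grid):
    """Fraction of cells occupied in either grid whose occupancy differs."""
    map_grid = np.asarray(map_grid, dtype=bool)
    live_grid = np.asarray(live_grid, dtype=bool)
    union = map_grid | live_grid
    if not union.any():
        return 0.0  # both grids are empty, so nothing has changed
    return float((map_grid ^ live_grid).sum() / union.sum())
```

Recording this value alongside the localization result for each run makes it easier to see at what level of change the system starts to struggle.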

Also, warehouse or industrial facilities with windows may appear different due to lighting changes, so testing the SLAM against these changes would be meaningful.

Figure 5: Example of scenery changes

4. Feature-poor environments

We come across scenarios with limited feature points, such as a narrow corridor with white walls. Without a secondary backup sensor system, a complete or near-complete lack of features results in loss of tracking in ANY SLAM system.

If you expect robots to keep tracking in such feature-poor scenarios, it is essential to test how far the SLAM can maintain sufficient accuracy.
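For lidar data, one way to flag a feature-poor scene during testing is to look at the directions of the surface normals in a scan. The sketch below is an illustrative check, not how any particular SLAM product detects degeneracy, and it assumes you already have unit normals for the scan points. In a long corridor, all normals point across the corridor, so nothing constrains motion along it, and the ratio of the smallest to the largest eigenvalue drops toward zero.

```python
import numpy as np


def translation_constraint_ratio(normals):
    """Ratio of smallest to largest eigenvalue of the sum of n @ n.T over normals."""
    normals = np.asarray(normals, dtype=float)
    A = normals.T @ normals
    eig = np.linalg.eigvalsh(A)  # eigenvalues in ascending order
    if eig[-1] <= 0.0:
        return 0.0  # no usable normals at all
    return float(eig[0] / eig[-1])


# Two parallel walls: every normal points across the corridor.
corridor = [[0.0, 1.0]] * 50 + [[0.0, -1.0]] * 50
# A closed room: walls facing in all four directions.
room = [[1.0, 0.0], [-1.0, 0.0], [0.0, 1.0], [0.0, -1.0]] * 25
```

A ratio near zero for `corridor` and near one for `room` matches the intuition that the corridor leaves one direction of motion unconstrained. For visual SLAM, a rough analogue would be tracking the number of matched feature points per frame.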

Figure 6: Example of feature-poor scenes

Final words

This article aims to give you in-depth technical information that is hard to find elsewhere on the internet. We looked at several preparation steps, evaluation metrics, and scenarios.

You may also have wondered about metrics such as durability, usability, and compatibility with the existing system — yes, you probably need to test these, too, depending on your use cases. However, what we mentioned in the article are the most critical and common ones across most (if not all) systems.

You may still have some exceptional cases and want to evaluate the SLAM system for those. Say hi, and we’d be happy to share our thoughts on it!

Read more about the basics of SLAM and how to select the best camera or lidar, and keep an eye on this space for more SLAM-related articles.

References

[1] Rainer Kummerle, Bastian Steder, Christian Dornhege, Michael Ruhnke, Giorgio Grisetti, Cyrill Stachniss and Alexander Kleiner, “On Measuring the Accuracy of SLAM Algorithms” [PDF]

[2] Peter Aerts and Eric Demeester, “Benchmarking of 2D-Slam Algorithms” [PDF]

[3] Filatov Anton, Filatov Artyom, and Krinkin Kirill, “2D SLAM Quality Evaluation Methods” [PDF]
