Congratulations! You are a proud owner of a cutting-edge Lidar sensor.

Okay… now what?!?

In this post, we will discuss what Lidar SLAM is, when you would need it, and how you can quickly evaluate whether it will work for your use case.

3D Lidar is an ideal tool for accurately measuring and monitoring environments, helping everything from autonomous last-mile delivery robots to unmanned aerial systems to autonomous vehicles perceive and react to the world around them. Lidar is a critical component of many vision and sensing systems.

However, there is a gap between sensing the data and making sense of the cumulative data.

Simultaneous Localization and Mapping (SLAM) is a method that provides devices with artificial perception, or more specifically, spatial awareness. It gives a system the ability to understand its position and orientation within an environment by sensing, creating, and constantly updating a representation of its surroundings.

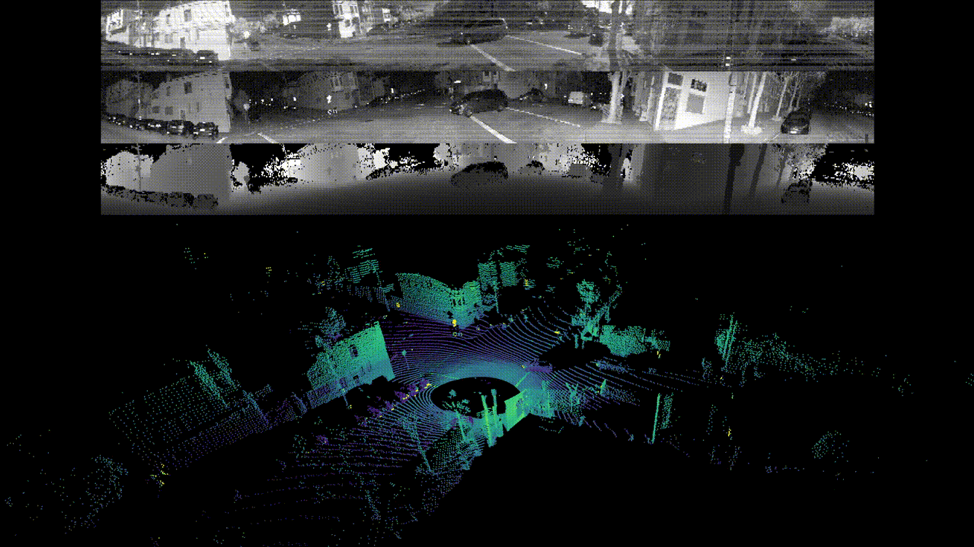

Raw data from the Lidar Sensor (OS0-128)

Most humans can do this well enough without much effort, but trying to get a machine to do this is another matter. Just as people will recognize landmarks and road features to create a mental map of a place they visit and know where they are, Lidar SLAM creates a point cloud representation of a map from the detected Lidar points and uses this point cloud map to determine the Lidar sensor’s position at any given time.

Just as we don’t remember every little detail of an area we visit, Lidar SLAM will use just enough of the unique features it detects to create the point cloud.

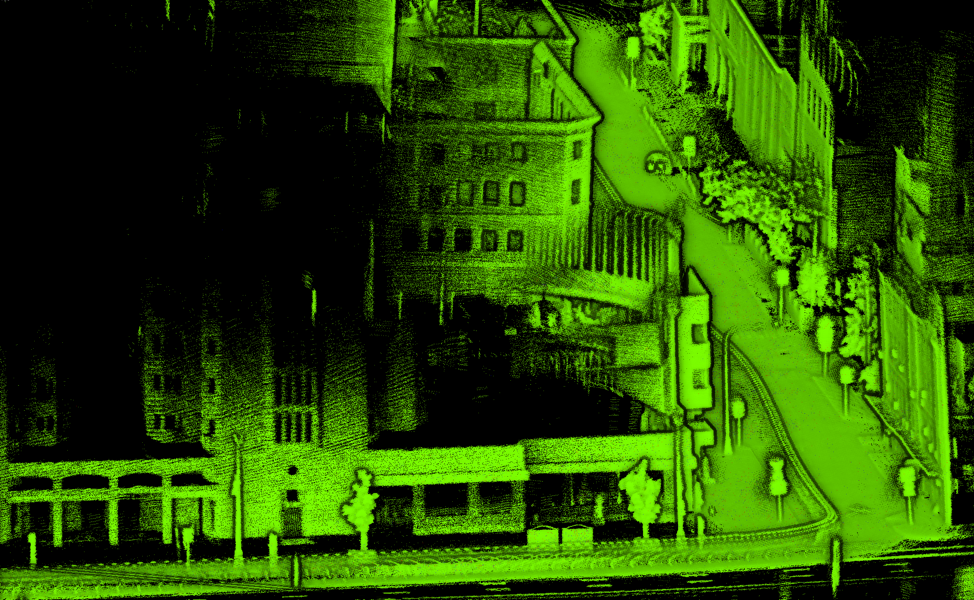

Point cloud obtained by Digital Roads with Ouster OS0-128 and processed with KdLidar

Location, Location, Location

The primary driver for whether you need Lidar SLAM in your project is directly related to how important it is for your device to know its location and position within a space.

Some examples of applications where SLAM can be critical include:

- Robotics (autonomous mobile robots): especially around navigation in known and unknown environments

- Autonomous vehicles and last-mile delivery vehicles: similar to robotics, SLAM adds the ability to navigate safely and efficiently from point A to B in varied external environments

- Mapping and surveying: while many vehicle-mounted mapping and surveying systems incorporate high-end INS and GNSS sensors for very precise outdoor positioning, Lidar SLAM can continue to provide precise position data indoors and in GPS-denied areas, such as urban canyons, tunnels, and covered structures like bridges and parking garages

Ouster Lidar sensors’ combination of high resolution, IP68/69K ruggedness, and 360° coverage makes them the perfect complement to projects requiring SLAM.

Why Kudan Lidar SLAM (KdLidar)?

Kudan is a leader in commercial SLAM software and has been creating computer vision software since 2011. They have had an industry-leading visual SLAM solution using cameras for many years, and have used that experience to create a high-performance Lidar SLAM software package. While there are open Lidar SLAM solutions available to use, getting them to be tightly integrated with your device and viable for commercial purposes can be a multi-year endeavor of customizing and tuning for your project.

In collaboration with Ouster, Kudan has made the evaluation of Lidar SLAM free and easy for users of Ouster Lidars. With any evaluation, you want to be able to make sure it is the right technology quickly and with high confidence.

Be systematic in how your evaluation is done

At the end of the day, you are looking to see if SLAM will meet the needs of your project. While each project is unique, there are several elements that may be core to many evaluation exercises.

- Accuracy: How accurate is the point cloud map against the real world?

- Repeatability accuracy: How accurately can the system position itself within a known map?

- Relocalization: How reliably and accurately does the system recognize its position on the map without prior pose information?

- Resources: Does everything work within the available computing and memory resources?

Accuracy

There are two types of accuracy when it comes to SLAM. The first is how accurately the point cloud represents the real world in terms of geometry and distance. Lidar sensors generate very accurate measurements even at a distance, and the expectation is that a point cloud generated from Lidar data will be similarly accurate. In reality, however, all sensor data tends to drift without correction, and errors accumulate over time. SLAM algorithms can compensate for this via mechanisms like Loop Closure and integration of other sensor data, but you should expect a deviation of up to 5 cm between any single map point and reality even in ideal conditions, given that Lidar sensor depth accuracy already has 2-3 cm of error.

During the evaluation process, however, you should focus on the general completeness of the map: whether it captures the boundaries, obstacles, and permanent fixtures. Expect deviation to be typically under 10 cm at any given point without fusing any other sensors. Fusing other sensors such as GNSS and IMU brings the error significantly lower, and fine-tuning the mapping capabilities of the system will come during its actual development. You may also want to consider how real-time processing versus post-processing affects accuracy for your application.
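One simple way to put a number on map accuracy is to compare distances between a few surveyed landmarks with the same distances measured in the generated point cloud. The sketch below is only an illustration: the landmark coordinates are made up, the function names are hypothetical, and the 10 cm budget is taken from the guideline above. Comparing distances between landmark pairs avoids having to align the map frame with the survey frame first.

```python
import math

def map_deviations(reference_pairs, map_pairs):
    """Absolute difference (metres) between each surveyed distance
    and the same distance measured between points in the map."""
    return [
        abs(math.dist(ref_a, ref_b) - math.dist(map_a, map_b))
        for (ref_a, ref_b), (map_a, map_b) in zip(reference_pairs, map_pairs)
    ]

# Hypothetical surveyed landmark pairs, (x, y, z) in metres.
reference_pairs = [
    ((0.0, 0.0, 0.0), (10.0, 0.0, 0.0)),
    ((0.0, 0.0, 0.0), (0.0, 5.0, 0.0)),
]
# The same landmarks as picked out of the point cloud map (made-up values).
map_pairs = [
    ((0.0, 0.0, 0.0), (10.04, 0.0, 0.0)),
    ((0.0, 0.0, 0.0), (0.0, 4.93, 0.0)),
]

deviations = map_deviations(reference_pairs, map_pairs)
worst = max(deviations)
within_budget = worst < 0.10  # 10 cm evaluation guideline

print(f"worst deviation: {worst * 100:.1f} cm, within budget: {within_budget}")
```

A handful of well-spread landmark pairs, including some far apart, tends to reveal accumulated drift better than many pairs clustered in one corner of the map.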

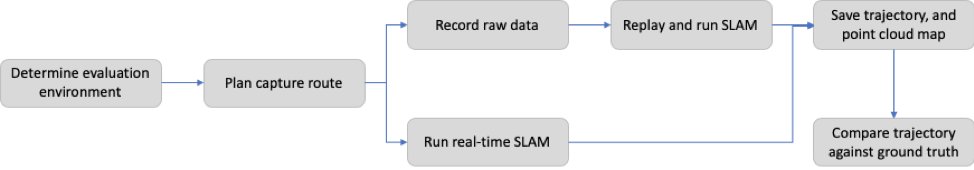

At an early stage, especially as you set up your system and tweak parameters, you may opt to use a recorded dataset to see how the different parameters affect the system performance. As you get closer to an integrated commercial product, you will likely test the system using real-time mode to ensure robust operations in different conditions.

It's good practice to build (and keep updated) a suite of recorded data that represents normal and corner cases of operations that are important to the success of your product, and to use it as part of your regression suite and validation process as development continues on the product.
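As a rough illustration of such a regression suite, the sketch below keeps a list of recorded cases with an acceptable error for each and flags any case whose latest result exceeds its limit. Everything here is hypothetical: the case names, the limits, and the measured errors are invented, and how the errors are actually produced (replaying a recording through your SLAM pipeline) is outside the scope of the sketch.

```python
from dataclasses import dataclass

@dataclass
class RecordedCase:
    name: str           # label of the recorded dataset
    kind: str           # "normal" or "corner" case
    max_error_m: float  # acceptable map deviation for this recording

def find_regressions(cases, measured_error_m):
    """Return names of cases that exceed their limit or have no result."""
    failures = []
    for case in cases:
        error = measured_error_m.get(case.name)
        if error is None or error > case.max_error_m:
            failures.append(case.name)
    return failures

# Hypothetical suite and results, for illustration only.
suite = [
    RecordedCase("warehouse_aisle_loop", "normal", 0.10),
    RecordedCase("parking_garage_ramp", "corner", 0.10),
    RecordedCase("long_featureless_corridor", "corner", 0.15),
]
latest_results = {
    "warehouse_aisle_loop": 0.06,
    "parking_garage_ramp": 0.12,
    "long_featureless_corridor": 0.14,
}

regressions = find_regressions(suite, latest_results)
print(regressions)
```

Treating a missing result as a failure is a deliberate choice in this sketch, so that a recording that silently stops being processed does not pass unnoticed.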

Repeatability

The other type of accuracy, especially important for robotics use cases, is a measure of how precise or repeatable the tracking of the device is. Many applications involve navigating to specific spots and following routines repeatedly over time, and you want the device to be able to repeat these activities precisely, whether it is maneuvering on streets or through aisles in a warehouse or factory. Repeatability measures how accurately a device positions itself against the point cloud map once the map has been created. Even without optimization, you should expect repeatability accuracy to be very high, with commercial deployments seeing sub-centimeter repeatability.
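Repeatability can be estimated by sending the device to the same waypoint several times and looking at the spread of the positions it reports. The sketch below assumes you have logged those reported positions yourself; the values and the function name are hypothetical, and the 1 cm threshold simply reflects the sub-centimeter figure mentioned above.

```python
import math
from statistics import mean

def repeatability(positions):
    """Return (worst, rms) distance in metres of each reported
    position from the centroid of all reported positions."""
    centroid = tuple(mean(axis) for axis in zip(*positions))
    offsets = [math.dist(p, centroid) for p in positions]
    rms = math.sqrt(mean(o * o for o in offsets))
    return max(offsets), rms

# Hypothetical (x, y) positions in metres reported on four visits
# to the same physical waypoint.
visits = [
    (5.000, 2.000),
    (5.004, 1.998),
    (4.997, 2.003),
    (5.001, 1.999),
]

worst, rms = repeatability(visits)
sub_centimeter = worst < 0.01

print(f"worst: {worst * 1000:.1f} mm, rms: {rms * 1000:.1f} mm")
```

Note that this measures the consistency of the reported pose, not how well the device physically returned to the waypoint; for the latter you would also need an external reference such as floor markers.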

Relocalization

There are times when a device needs to recognize its position on the map without any previous pose information, either because Lidar SLAM has lost track of the features around it or because the device has just been turned on or reset. Relocalization is the capability of the device to scan its current surroundings and figure out where it is without any prior information about its position.

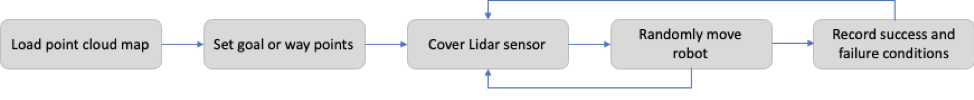

Your positioning system needs to be robust enough to continue operating despite gaps in past trajectory and location data. One of the easiest ways to test this is to “kidnap” the device: blindfold it, move it to a new location, and see if it can figure out where it is.

The device should be able to determine its current location after “kidnapping” and continue operating from its new position. Areas of failure may indicate the need to capture more data in the area to enhance the map, or you may need to introduce routines and safe movements to reestablish tracking.
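To make the kidnap test systematic, you can repeat it at several locations and record whether the device relocalized and how far its estimate was from the true position. The sketch below summarizes such a log. The trial data, the function name, and the 10 cm tolerance are all assumptions for illustration; a trial with no estimate (`None`) stands for a device that never relocalized.

```python
import math

def summarize_kidnap_trials(trials, tolerance_m=0.10):
    """Return (success_rate, failed_locations) for a list of
    (location, true_xy, estimated_xy_or_None) trials."""
    failed = []
    for location, true_xy, estimated_xy in trials:
        if estimated_xy is None or math.dist(true_xy, estimated_xy) > tolerance_m:
            failed.append(location)
    success_rate = 1.0 - len(failed) / len(trials)
    return success_rate, failed

# Hypothetical trial log: drop-off location, true position, reported position.
trials = [
    ("loading_dock", (2.0, 8.0), (2.03, 7.98)),
    ("aisle_7", (15.0, 4.0), (15.01, 4.02)),
    ("open_yard", (40.0, 22.0), None),          # never relocalized
    ("stairwell", (6.0, 30.0), (9.5, 30.2)),    # relocalized to the wrong place
]

success_rate, failed_locations = summarize_kidnap_trials(trials)
print(f"success rate: {success_rate:.0%}, failed at: {failed_locations}")
```

The list of failed locations is the useful output here, since it points to the areas where the map may need more data or where a recovery routine is worth adding.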

What to expect, and how to get started

As with any evaluation, you will need to manage expectations. The evaluation version of Kudan Lidar SLAM (KdLidar) is intended to provide an indication of whether SLAM is suitable or needed for your project. Because it has been simplified and generalized to work across a broad range of conditions and device setups, it has limitations that affect the performance and functionality of the software.

Although KdLidar can work across different platforms and is available via a comprehensive API library, the evaluation software will be provided as a ROS node encapsulating SLAM features, such as mapping and tracking. The required computing environment is Ubuntu 18.04+ running ROS Melodic.

In addition, certain complex features have been disabled. These include tighter sensor integration (IMU / INS / GNSS / Wheel Odometry), which enables more accurate and robust tracking, and advanced map management features such as dense point cloud generation, map merging, map splitting, automatic alignment of multiple maps from different sessions, and map streaming.

If you think that KdLidar will work for your project, you can move to the development phase, with broader Kudan support, and the full library at your disposal.

To get started, visit http://www.kudan.io/ouster to request access to the free evaluation software, documentation on how to get started, and pointers on running the evaluation.

Happy SLAMming!

About Kudan Inc.

Kudan (Tokyo Stock Exchange securities code: 4425) is a Deep Tech research and development company specializing in algorithms to enable artificial perception (AP). As a complement to artificial intelligence (AI), AP functions allow machines to develop autonomy. Currently, Kudan uses its high-level technical innovation to explore business areas based on its milestone models established for Deep Tech, which provide wide-ranging impact on several major industrial fields. For more information, please refer to Kudan’s website at http://www.kudan.io/.